Tech Prism 923880161 Dynamic Flow

Tech Prism 923880161 Dynamic Flow offers a structured approach to real-time adaptation, emphasizing autonomy, modularity, and auditable governance. It breaks complex behavior into composable insights through edge-aware processing and layered orchestration. The framework targets low-latency decisions, proactive fault detection, and scalable, responsible data use across industries. Open questions remain, however, about how governance, UX, and data lineage should evolve as goals shift and pipelines scale across diverse environments.

What Is Tech Prism 923880161 Dynamic Flow and Why It Matters

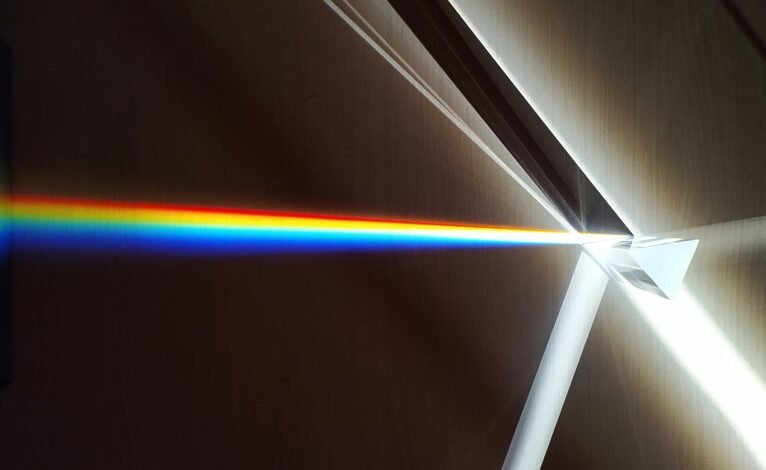

Tech Prism 923880161 Dynamic Flow refers to a structured approach for understanding how modern systems adapt in real time to shifting inputs, constraints, and objectives.

It emphasizes autonomy, modularity, and resilience, translating complex system behavior into composable parts that can be reasoned about separately.

Tech Prism highlights how Dynamic Flow informs decision-making, design, and governance: systems can realign with evolving goals while staying clear in intent and within deliberately chosen constraints.

How Dynamic Flow Handles Real-Time Data at Scale

Dynamic Flow scales real-time data handling by decomposing streams into modular, interoperable components that can be observed, governed, and adjusted independently. It achieves real-time scaling through layered buffering, asynchronous orchestration, and provenance trails, allowing systems to adapt without disruption.
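The framework does not publish an implementation, so the following is only a minimal sketch of the three mechanisms named above, assuming Python's standard `asyncio` library: one bounded `asyncio.Queue` per layer stands in for layered buffering, concurrently running coroutines stand in for asynchronous orchestration, and a per-record list stands in for the provenance trail. The `Record` type and the `scale` and `offset` stages are hypothetical.

```python
import asyncio
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Record:
    """A single event flowing through the pipeline (hypothetical type)."""
    value: float
    provenance: list = field(default_factory=list)  # names of stages visited


async def source(out_q: asyncio.Queue, values: list) -> None:
    # put() waits when the bounded buffer is full, applying backpressure
    # to the producer instead of dropping data.
    for v in values:
        rec = Record(value=v)
        rec.provenance.append("source")
        await out_q.put(rec)
    await out_q.put(None)  # sentinel: end of stream


async def stage(name: str, fn: Callable[[float], float],
                in_q: asyncio.Queue, out_q: asyncio.Queue) -> None:
    # Generic stage: read from one buffer, transform, write to the next,
    # and append this stage's name to the record's provenance trail.
    while True:
        rec: Optional[Record] = await in_q.get()
        if rec is None:
            await out_q.put(None)  # forward the sentinel downstream
            break
        rec.value = fn(rec.value)
        rec.provenance.append(name)
        await out_q.put(rec)


async def sink(in_q: asyncio.Queue, results: list) -> None:
    while True:
        rec = await in_q.get()
        if rec is None:
            break
        rec.provenance.append("sink")
        results.append(rec)


async def run_pipeline(values: list, buffer_size: int = 4) -> list:
    # One bounded queue between each pair of layers.
    q1, q2, q3 = (asyncio.Queue(maxsize=buffer_size) for _ in range(3))
    results: list = []
    # All layers run concurrently; gather waits for every one to finish.
    await asyncio.gather(
        source(q1, values),
        stage("scale", lambda x: x * 2, q1, q2),
        stage("offset", lambda x: x + 1, q2, q3),
        sink(q3, results),
    )
    return results
```

For example, `asyncio.run(run_pipeline([1.0, 2.0, 3.0]))` yields records with values 3.0, 5.0, and 7.0, each carrying the trail `["source", "scale", "offset", "sink"]`. Because each stage only touches its own input and output queues, a stage can be observed, replaced, or retuned independently of the others.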

Edge-aware processing shifts workload toward proximal nodes, reducing latency while maintaining consistency, observability, and auditable behavior across distributed pipelines.
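One way to picture "shifting workload toward proximal nodes" is a placement rule that picks the lowest-latency node with spare capacity. The sketch below assumes each node reports a measured latency and a load figure; the `Node` fields, the `choose_node` helper, and the 0.8 load cap are hypothetical, not part of the framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Node:
    name: str
    latency_ms: float  # measured round-trip time from the data source
    load: float        # current utilization, 0.0 to 1.0
    is_edge: bool


def choose_node(nodes: list, max_load: float = 0.8) -> Node:
    """Pick the lowest-latency node that still has spare capacity.

    Edge nodes usually sit closest to the source, so they win on latency;
    when every edge node is saturated, work falls back to the next-nearest
    node (for example, a central one).
    """
    candidates = [n for n in nodes if n.load < max_load]
    if not candidates:
        raise RuntimeError("no node has spare capacity")
    # Sort by latency first; prefer edge nodes when latencies tie.
    return min(candidates, key=lambda n: (n.latency_ms, not n.is_edge))
```

With nodes `edge-a` (4 ms, load 0.95), `edge-b` (6 ms, load 0.40), and `central` (45 ms, load 0.10), the rule skips the saturated `edge-a` and selects `edge-b`; only when no edge node has capacity does work move to `central`.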

Practical Use Cases Across Industries With Edge-Aware Processing

Edge-aware processing unlocks targeted efficiency across sectors, enabling real-time insights at the source while preserving global consistency. In manufacturing, logistics, healthcare, and energy, such deployments can reduce latency, improve fault detection, and balance workloads across nodes. Real-time analytics support immediate decisions, while edge orchestration coordinates distributed nodes into resilient pipelines. These patterns show practical, scalable value without sacrificing data sovereignty or governance.
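The text does not say how fault detection works at the edge. As an illustration only, one lightweight technique an edge node could run locally is a rolling z-score check: flag a reading that lies more than a threshold number of standard deviations from the mean of a recent window. This is a swapped-in technique, not the framework's method, and the `RollingZScoreDetector` class, window size, and threshold are hypothetical.

```python
import statistics
from collections import deque


class RollingZScoreDetector:
    """Flags readings far from the mean of a sliding window of recent values."""

    def __init__(self, window: int = 20, threshold: float = 3.0) -> None:
        self.values = deque(maxlen=window)  # oldest values drop out automatically
        self.threshold = threshold

    def update(self, x: float) -> bool:
        """Return True if x looks anomalous relative to the current window."""
        is_fault = False
        # A standard deviation needs at least two points.
        if len(self.values) >= 2:
            mean = statistics.fmean(self.values)
            stdev = statistics.stdev(self.values)
            if stdev > 0 and abs(x - mean) / stdev > self.threshold:
                is_fault = True
        # Only normal readings enter the window, so a fault does not
        # shift the baseline it is measured against.
        if not is_fault:
            self.values.append(x)
        return is_fault
```

Because the check needs only a small window held in memory, it can run on the device that produces the data, so a spike such as a sensor jumping from about 10.0 to 50.0 is flagged at the source rather than after a round trip to a central service.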

Getting Started: Best Practices, Governance, and UX for Pipelines

As organizations expand edge-aware pipelines across industries, establishing clear governance, best practices, and user-centric UX becomes foundational to sustained reliability and compliance.

The discussion emphasizes governance frameworks, disciplined deployment, and measurable quality metrics, enabling teams to iterate with confidence.

With these practices, governance structures, and UX considerations in place, teams can design pipelines for real-time data at scale while keeping architectures resilient and free to evolve.
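"Measurable quality metrics" can be made concrete as a per-batch gate that a deployment must pass. The sketch below assumes three common pipeline metrics (completeness, error rate, and 95th-percentile processing lag); the `BatchStats` and `QualityThresholds` types, the `check_quality` helper, and the default thresholds are all hypothetical choices for illustration.

```python
from dataclasses import dataclass


@dataclass
class BatchStats:
    total: int           # records received in the batch
    missing_fields: int  # records missing at least one required field
    failed: int          # records that raised errors during processing
    p95_lag_s: float     # 95th-percentile delay from event time to processing


@dataclass
class QualityThresholds:
    min_completeness: float = 0.99
    max_error_rate: float = 0.01
    max_p95_lag_s: float = 2.0


def check_quality(stats: BatchStats, t: QualityThresholds) -> list:
    """Return human-readable violations; an empty list means the batch passes."""
    if stats.total == 0:
        return ["empty batch"]
    violations = []
    completeness = 1 - stats.missing_fields / stats.total
    error_rate = stats.failed / stats.total
    if completeness < t.min_completeness:
        violations.append(f"completeness {completeness:.3f} < {t.min_completeness}")
    if error_rate > t.max_error_rate:
        violations.append(f"error rate {error_rate:.3f} > {t.max_error_rate}")
    if stats.p95_lag_s > t.max_p95_lag_s:
        violations.append(f"p95 lag {stats.p95_lag_s:.1f}s > {t.max_p95_lag_s}s")
    return violations
```

Returning a list of named violations rather than a single pass/fail flag keeps the gate auditable: the same output can block a rollout, feed a dashboard, and be stored alongside the batch's lineage record.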

Conclusion

Tech Prism 923880161 Dynamic Flow emerges where modular design meets real-time needs, and where autonomous orchestration, perhaps unexpectedly, aligns with governance. The framework's edge-aware processing reveals patterns only where systems intersect: where data streams, devices, and decisions converge. This alignment can yield low latency, auditable lineage, and scalable resilience. In practice, organizations discover that disciplined modularity and proactive fault detection are not separate goals but two sides of the same converging path toward robust, data-driven outcomes.